These days, whenever AI comes up in conversation, everyone says, "Now you can code and build apps without knowing much about programming. As long as you have an idea, you can launch it right away."

To some extent, that's true. The problem is that anyone can create, but no one takes responsibility.

AI is designed to produce results as quickly and efficiently as possible.

Security, however, is inherently slow, tedious, and meticulous work that demands thorough verification. So the two rarely align.

The barrier to coding has essentially collapsed. In the past, a project needed several developers just to get running; now one person can turn it into an app in a single day.

Solo developers, indie apps, and side projects are pouring in. On the surface, it looks like the democratization of creation.

But browse the app store and the picture looks different: near-identical AI apps and clone services with different names, haha.

They have almost no functionality, but their descriptions sound impressive. Is this really innovation, or just garbage? It's hard to say.

Platforms are gradually losing their ability to filter. For users, it's becoming harder and harder to decide what to download.

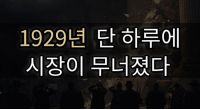

This situation is reminiscent of the video game market of the 1980s, which was thriving and then suddenly collapsed.

Once anyone could make a game, people started churning out anything.

The result was the so-called Atari shock, the video game crash of 1983. The market collapsed under oversupply, and consumers turned away.

Today's AI app market looks like the very beginning of that same cycle. There are already reports of spammy apps flooding the app store.

The problem is that there are now far too many for people to filter through one by one.

People say the "vibe" matters more than logical design: if it feels right, just build it and see.

But who takes responsibility? Code verification? Security testing?

That's why we hear phrases like "the Wild West era of LLMs." The rules are loose, the guns are quick, and accidents are already happening.

When you look at the code generated by AI, it may look fine on the surface.

But look inside and it pulls in external data, its authentication is weak, and security holes come as standard.

Reviewing such code and bringing it up to the level of a commercial service still takes a long time. Skip that step, and you get bugs and errors as a bonus.

Ultimately, anyone can create now, but no one takes responsibility.

Meanwhile, low-quality apps and security incidents are, unsurprisingly, on the rise.

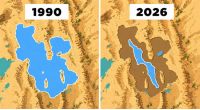

AI will keep getting faster. Security will still be slow.

As this gap widens, I believe the next "shock" could come sooner than expected.
