The terms 64-bit and 128-bit refer to the width of the data and memory addresses that a CPU can handle in a single operation.

In simple terms, 64-bit or 128-bit can be thought of as the weight a computer can lift at one time, similar to muscle size. A larger muscle can lift heavier weights, but that doesn't mean the person suddenly becomes smarter. The same goes for computers. A 64-bit computer handles numbers and memory addresses 64 bits at a time, which does not make it any smarter at solving problems.

The numbers used in public-key encryption such as RSA are already far wider than any CPU word: 2048 or 4096 bits. A 64-bit CPU therefore processes them by splitting them into several word-sized pieces, and a 128-bit CPU would do the same, only with half as many pieces.
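As a rough illustration of that splitting, here is a minimal Python sketch. The helper names `to_limbs` and `limb_multiply` are made up for this example, and the schoolbook method shown is only one simple way to multiply; real cryptographic libraries use more optimized routines. It multiplies two arbitrary 2048-bit numbers piece by piece and counts how many word-sized multiplications are needed at each word width.

```python
def to_limbs(n, word_bits):
    """Split a non-negative integer into word-sized pieces, least significant first."""
    mask = (1 << word_bits) - 1
    limbs = []
    while n:
        limbs.append(n & mask)
        n >>= word_bits
    return limbs or [0]


def limb_multiply(a, b, word_bits):
    """Schoolbook multiplication on limbs.

    Returns (product, number of word-by-word multiplications performed).
    """
    a_limbs = to_limbs(a, word_bits)
    b_limbs = to_limbs(b, word_bits)
    product = 0
    word_mults = 0
    for i, x in enumerate(a_limbs):
        for j, y in enumerate(b_limbs):
            # Each partial product is shifted to the position of its limbs.
            product += (x * y) << (word_bits * (i + j))
            word_mults += 1
    return product, word_mults


# Arbitrary 2048-bit values chosen for illustration only.
a = (1 << 2047) | 12345
b = (1 << 2047) | 67890

for word_bits in (64, 128):
    product, word_mults = limb_multiply(a, b, word_bits)
    print(word_bits, len(to_limbs(a, word_bits)), word_mults, product == a * b)
```

With 64-bit words the number splits into 32 pieces and the multiplication takes 1024 word multiplications; with 128-bit words it is 16 pieces and 256 multiplications. The wider word saves a constant factor, but the number still has to be broken up either way.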

The reason it takes so long to break the encryption is not that the computational units are small, but that the cost of prime factorization grows explosively with the size of the number. Doubling the CPU's bit width speeds up the arithmetic by a modest constant factor at best. It does not turn a calculation that takes billions of years into one that takes a few days.
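To get a feel for that explosive growth, the sketch below evaluates the commonly quoted heuristic running-time formula for the general number field sieve (GNFS), the fastest known classical factoring method for large RSA-style numbers. The function name `gnfs_log2_cost` is invented for this example, and the formula ignores constant factors and lower-order terms, so the result is an order-of-magnitude estimate, not a prediction of real running time.

```python
import math


def gnfs_log2_cost(modulus_bits):
    """Heuristic log2 of the GNFS work needed to factor a modulus of this size.

    Uses exp((64/9)^(1/3) * (ln n)^(1/3) * (ln ln n)^(2/3)),
    dropping constants and the o(1) term.
    """
    ln_n = modulus_bits * math.log(2)
    exponent = (64 / 9) ** (1 / 3) * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)


for bits in (1024, 2048, 4096):
    print(bits, "bit modulus: about 2^%d operations" % round(gnfs_log2_cost(bits)))
```

Under this estimate, going from a 1024-bit to a 2048-bit key multiplies the work by roughly a billion (about 2^30), while a CPU with words twice as wide only trims a small constant factor off each multiplication.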

To make breaking encryption genuinely easier, what has to change is the algorithm, not the bit count. Quantum computers are gaining attention not because of wider CPU words, but because they use an entirely different method of computation.

Thus, even if a 128-bit computer comes out, current encryption remains secure. To understand why we are still in the 64-bit era and why factoring and breaking encryption are so hard, we first need to be clear about what 64-bit means.

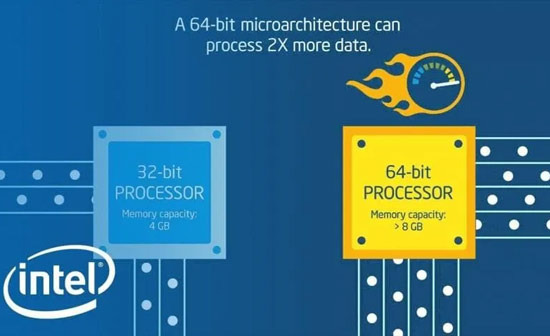

A 64-bit computer is one whose CPU uses 64-bit memory addresses and 64-bit basic integers. This determines how much memory can be addressed and how large a chunk of data can be processed in one operation. The transition from 32-bit to 64-bit on ordinary PCs was driven largely by the need to get past the 4 GB memory limit of 32-bit addressing. This is a matter of hardware architecture.
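The 4 GB limit follows directly from the address width. The short sketch below assumes a flat, byte-addressable memory model, where every distinct address names one byte; `addressable_bytes` is a name chosen just for this example.

```python
def addressable_bytes(address_bits):
    """Number of distinct byte addresses available with the given pointer width."""
    return 2 ** address_bits


GIB = 2 ** 30  # bytes in one gibibyte
EIB = 2 ** 60  # bytes in one exbibyte

print(addressable_bytes(32) // GIB, "GiB")  # 4 GiB
print(addressable_bytes(64) // EIB, "EiB")  # 16 EiB
```

A 32-bit address can name 4 GiB of memory, while a 64-bit address can name 16 EiB, which is about four billion times more.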

Encryption and prime factorization are a different matter entirely. Public-key encryption such as RSA is not computed in a single 64-bit or 128-bit CPU operation. A 2048-bit or 4096-bit integer is split into word-sized pieces, and each calculation repeats word-level operations thousands of times or more.

In other words, whether the CPU is 64-bit or 128-bit, large-number calculations still have to be broken down and computed in many steps. Increasing the CPU bit count therefore does not suddenly make encryption easier to break.
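The repetition can be made concrete with modular exponentiation, the core operation in RSA. The following is a minimal sketch using the textbook square-and-multiply method; the function name `modexp_count`, the stand-in modulus, and the exponent are all invented for illustration and are not a real key. Production libraries use faster and more side-channel-resistant variants.

```python
def modexp_count(base, exponent, modulus):
    """Left-to-right square-and-multiply.

    Returns (base**exponent % modulus, number of big-number multiplications).
    """
    result = 1
    mults = 0
    for bit in bin(exponent)[2:]:
        # One squaring per exponent bit.
        result = (result * result) % modulus
        mults += 1
        if bit == "1":
            # One extra multiplication for each 1 bit.
            result = (result * base) % modulus
            mults += 1
    return result, mults


modulus = (1 << 2047) | 1         # stand-in odd 2048-bit modulus, not a real RSA key
exponent = (1 << 2047) | 0b1011   # arbitrary 2048-bit exponent
result, mults = modexp_count(3, exponent, modulus)
print(mults, result == pow(3, exponent, modulus))
```

Even this sparse exponent needs about two thousand full-width multiplications, and each of those is itself made of many word-sized multiplications. A typical exponent with roughly half its bits set needs about half as many again. A wider CPU word reduces the word-level count per multiplication, but the overall structure of repeated, broken-down steps stays the same.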

So why not move to 128-bit or 256-bit CPUs? Because the practical benefits are minimal. A 64-bit address space (2^64 bytes, about 16 EiB) is already far larger than the memory of any real machine, and performance improvements come more from parallel processing, cache structure, and power efficiency than from a wider word. In encryption calculations, the algorithm and parallelization matter much more than the bit width of a single core.

Thus, to make breaking encryption easier, what must change is not the computer's bit count but the method of calculation itself. Quantum computers are gaining attention not because of more bits, but because they compute in an entirely different way. Ultimately, we are still using 64-bit computers not because of any limit tied to breaking encryption, but because there is almost no gain from going wider. And encryption is secure not because computers are 64-bit, but because the underlying mathematical problems are inherently too hard to solve.
