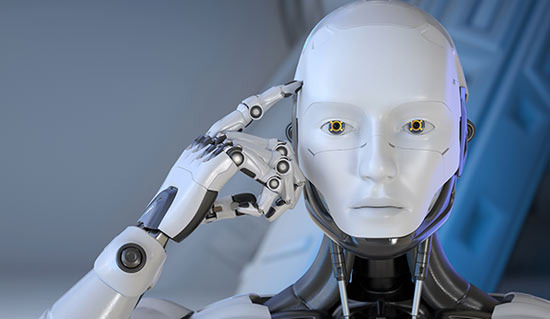

They affirm that my thoughts are correct, my plans are good, and they subtly push the ideas I already believe in. So, there are times when I think, "Wow, AI is really smart."

However, there's a problem here. Not all of that affirmation is necessarily true.

This phenomenon is called hallucination. It refers to cases where AI generates very plausible statements that are actually incorrect.

On the internet, there's a saying that "people in their 40s and 50s are the easiest for AI to deceive."

However, this hasn't been scientifically proven, and no research offers a definitive conclusion to support the claim. Nonetheless, various surveys and usage patterns go some way toward explaining why people in this age group might be more swayed by AI's sweet responses.

First, an interesting fact: people in their 40s and 50s are not the generation that uses AI the most.

Surveys show that people in their 20s and 30s use it much more. Young people dominate in usage. So the explanation that "they use it a lot, so they are easily deceived" doesn't hold up.

So why does the discussion keep coming back to people in their 40s and 50s? The reason is not how much they use it, but the contexts in which they use it.

People in this age group often hold senior positions at work. They are frequently team leaders, managers, or decision-makers. They review reports, set strategy, hire people, and make important judgments. As a result, they often use AI responses not just for fun but for actual decision-making.

For example, if someone in their 20s misplaces trust in AI, it might just end in a personal mistake. But if someone in their 40s or 50s misplaces that trust, it could influence the direction of the company in a meeting. This is why the flattering illusions of an agreeable AI can be more dangerous for them.

One interesting study shows that the more people trust AI, the less they tend to verify information. People in their 40s and 50s, in particular, have considerable experience in their fields. This leads to moments like, "My intuition is right, and AI says the same thing." That moment is the risky one. When AI echoes what experienced, confident people already think, they may not feel the need to double-check.

Another important fact is that when AI is wrong, it doesn't usually make completely nonsensical mistakes. Instead, it often makes errors that seem plausible.

This makes it easier to be deceived. If the answer were completely absurd, one would immediately doubt it. But if it prompts a reaction like, "Hmm... that seems right?" it's easy to overlook.

People in their 40s and 50s are also especially busy. Right before a meeting, just before replying to an email, or at a crucial decision point, if AI offers a plausible sentence, they might think, "This is good," and just use it. So the reason this age group is easily deceived may not be naivety but simply a lack of time.

There's also an emotional reason. Research shows that people over 50 often use AI as a counseling partner. Some issues are awkward to discuss with others, such as matters of saving face or problems they don't want to be judged for. They often confide these to AI.

AI always responds, doesn't get tired, and doesn't interrupt. So there are times when AI feels more like a good listener than an expert. This leads people to trust pleasant responses more than accurate ones.

In summary, people in their 40s and 50s are not easily deceived by AI because they lack digital knowledge. Rather, they are at a stage of life where they have some technical understanding, bear many responsibilities, make numerous judgments, and are accumulating fatigue. Meanwhile, AI always speaks politely and quickly, as if it were on their side.

Thus, experts advise not using AI less, but being more skeptical when AI agrees too neatly with what you already think.

The truly dangerous hallucination may not be an absurd lie, but a statement so plausible that it doesn't even raise suspicion.
